Chapter 4

Frequentist Inference

Frequentist inference is the process of determining properties of an underlying distribution via the observation of data.

Point Estimation

One of the main goals of statistics is to estimate unknown parameters. To approximate these parameters, we choose an estimator, which is simply any function of randomly sampled observations.

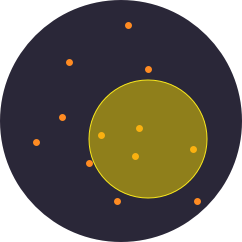

To illustrate this idea, we will estimate the value of \( \pi \) by uniformly dropping samples on a square containing an inscribed circle. Notice that the value of \( \pi \) can be expressed as a ratio of areas. $$\begin{matrix}S_{circle} = \pi r^2\\S_{square} = 4r^2\end{matrix} \implies \pi = 4 \frac{S_{circle}}{S_{square}}$$ We can estimate this ratio with our samples. Let \( m \) be the number of samples within our circle and \( n \) the total number of samples dropped. We define our estimator \( \hat{\pi} \) as: $$\hat{\pi} = 4 \frac{m}{n}$$ It can be shown that this estimator has the desirable properties of being unbiased and consistent.

|

\( m= \) 0.00 \( n= \) 0.00 |

\( \hat{\pi}= \) |

Confidence Interval

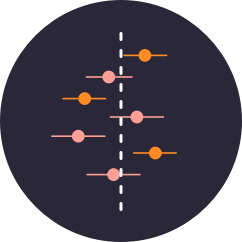

In contrast to point estimators, confidence intervals estimate a parameter by specifying a range of possible values. Such an interval is associated with a confidence level, which is the probability that the procedure used to generate the interval will produce an interval containing the true parameter.

Choose a probability distribution to sample from.

Choose a sample size \((n)\) and confidence level \((1-\alpha)\).

Start sampling to generate confidence intervals.

This visualization was adapted from Kristoffer Magnusson's fantastic visualization of confidence intervals.

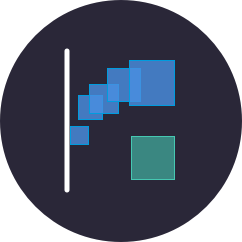

The Bootstrap

Much of frequentist inference centers on the use of "good" estimators. The precise distributions of these estimators, however, can often be difficult to derive analytically. The computational technique known as the Bootstrap provides a convenient way to estimate properties of an estimator via resampling. In this example, we resample with replacement from the empirical distribution function (which is itelf generated by sampling once from the population) in order to estimate the standard error of the sample mean.

Choose a probability distribution from which we will sample once to generate the empirical distribution function.

Choose a sample (and resampling) size \((n)\) and sample from your chosen distribution.

Resample to get an idea of the spread of the sample mean's distribution.